Rewarding good practice in research

Whenever I’m involved in a discussion about how to encourage researchers to adopt new practices, eventually someone will come out with some variant of the following phrase:

“That’s all very well, but researchers will never do XYZ until it’s made a criterion in hiring and promotion decisions.”

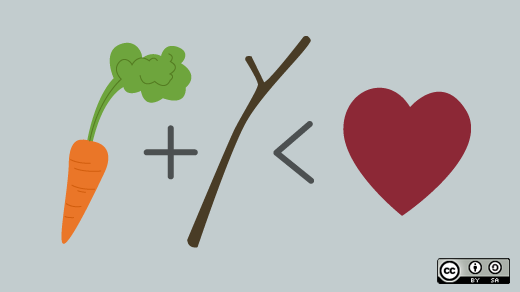

With all the discussion of carrots and sticks I can see where this attitude comes from, and strongly empathise with it, but it raises two main problems:

- It’s unfair, and more than a little insulting, to lump everyone into one homogeneous group; and

- Taking all the different possible XYZs into account, that’s an awful lot of hoops to expect anyone to jump through.

Firstly, “researchers” are as diverse as the rest of us in terms of what gets them out of bed in the morning. Some of us want prestige; some want to contribute to a greater good; some want to create new things; some just enjoy the work.

One thing I’d argue we all have in common is this: nothing is more offputting than feeling like you’re being strongarmed into something you don’t want to do.

If we rely on simplistic metrics, people will focus on those and miss the point. At best people will disengage and at worst they will actively game the system. I’ve got to do these ten things to get my next payrise, and still retain my sanity? Ok, what’s the least I can get away with and still tick them off? You see it with students taking poorly-designed assessments, and grown-ups are no different.

We do need to wield carrots as well as sticks, but the whole point is that these practices are beneficial in and of themselves. The carrots are already there if we articulate them properly and clear the roadblocks (don’t you enjoy mixed metaphors?). Creating artificial benefits will just dilute the value of the real ones.

Secondly, I’ve heard a similar argument made for all of the following practices and more:

- Research data management

- Open Access publishing

- Public engagement

- New media (e.g. blogging)

- Software management and sharing

Some researchers devote every waking hour to their work, whether it’s in the lab, writing grant applications, attending conferences, authoring papers, teaching, and so on and so on. It’s hard to see how someone with all this in their schedule can find time to exercise any of these new skills, let alone learn them in the first place. And what about the people who sensibly restrict the hours taken by work to spend more time doing things they enjoy?

Yes, all of the above practices are valuable, both for the individual and the community, but they’re all new (to most) and hence require more effort up front to learn. We have to accept that it’s inevitably going to take time for all of them to become “business as usual”.

I think if the hiring/promotion/tenure process has any role in this, it’s in asking whether the researcher can build a coherent narrative as to why they’ve chosen to focus their efforts in this area or that. You’re not on Twitter but your data is being used by 200 research groups across the world? Great! You didn’t have time to tidy up your source code for GitHub but your work is directly impacting government policy? Brilliant!

We still need to convince more people to do more of these beneficial things, so how? Call me naïve, but maybe we should stick to making rational arguments, calming fears and providing low-risk opportunities to learn new skills. Acting (compassionately) like a stuck record can help. And maybe we’ll need to scale back our expectations in other areas (journal impact factors, anyone?) to make space for the new stuff.
